
Fig. 1. Sound wave representation (Source: GDJ, n.d.)

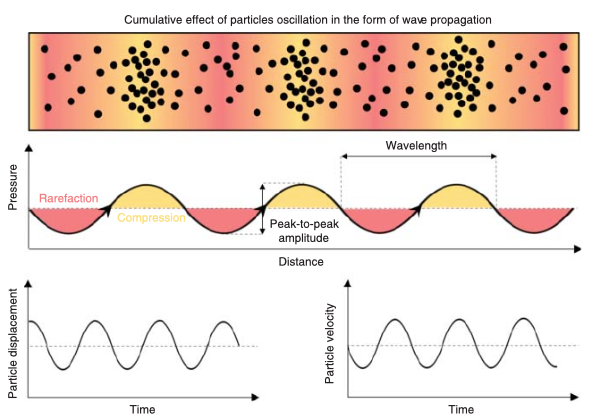

Sound is a physical phenomenon: a form of energy generated by the vibration of an object. As an object vibrates, it sets the surrounding particles in motion, causing them to oscillate. These particles push against neighbouring ones, creating a chain reaction that propagates through the medium as a longitudinal wave and transfers energy from molecule to molecule, while each particle only oscillates around its resting position. The properties of a sound wave depend on the medium through which it propagates: in air, sound travels at approximately 340 m/s, while in liquids and solids such as water or metal, which are far less compressible, it travels considerably faster. In everyday life, we are constantly exposed to a wide range of sounds, which may consist of single or repeated unstructured acoustic events (such as a door slamming or an object falling), usually referred to as noise rather than tones (Sahin et al., 2023).
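The "approximately 340 m/s" figure for air depends on temperature. The sketch below uses the standard ideal-gas approximation for dry air (331.3 m/s at 0 °C, scaling with the square root of absolute temperature) and compares it with rounded textbook values for water and steel; those two values are assumptions added for illustration and do not come from the text.

```python
import math

def speed_of_sound_air(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s) at temp_c degrees Celsius.

    Ideal-gas scaling: v = v0 * sqrt(T / T0), with v0 = 331.3 m/s at T0 = 273.15 K.
    """
    return 331.3 * math.sqrt((temp_c + 273.15) / 273.15)

def travel_time(distance_m: float, speed_m_s: float) -> float:
    """Seconds needed for a sound wave to cover distance_m at speed_m_s."""
    return distance_m / speed_m_s

# Rounded textbook values in m/s (assumed for illustration only).
APPROX_SPEED = {
    "air (15 C)": speed_of_sound_air(15.0),
    "water": 1480.0,
    "steel": 5900.0,
}

if __name__ == "__main__":
    for medium, v in APPROX_SPEED.items():
        print(f"{medium:12s} {v:7.1f} m/s   1 km in {travel_time(1000.0, v):.2f} s")
```

At around 15 °C the formula gives roughly 340 m/s, matching the figure quoted above; the much higher speeds in water and steel reflect how strongly those media resist compression, not their density alone.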

Interestingly, in environments where particles are extremely sparse, such as outer space, sound cannot propagate in the way we experience it on Earth. Although space is not a perfect vacuum and contains diffuse matter, the density is so low that sound waves cannot travel effectively, which is why the well-known phrase “in space no one can hear you scream” is, from a physical perspective, almost true (Impey, 2023).

Fig. 2. Particle oscillation in a medium: acoustic wave propagation (Source: Adapted from Sahin et al., 2023)

Frequency, Pitch and Loudness

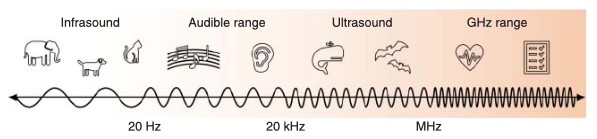

When these vibrations are fast enough to fall within the range of our hearing, we hear a sound. The number of vibrations per second is called frequency and is measured in hertz (Hz). The human auditory system can detect frequencies between approximately 20 and 20,000 Hz. Vibrations below this range are too slow to be heard (for example, the movement of a waving hand), while those above it exceed the limits of human hearing (Sahin et al., 2023).

However, human hearing is relatively limited compared to other species. Some animals perceive frequencies far beyond our range: bats can detect ultrasonic frequencies exceeding 100,000 Hz, while elephants communicate using infrasonic sounds as low as 16 Hz, allowing them to transmit signals across several kilometres (IFAW, 2024).
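The frequency figures above can be made more concrete by sorting them into bands and computing the period (T = 1/f) and wavelength (λ = v/f) of each. The sketch below assumes the 340 m/s speed of sound in air quoted earlier and the nominal 20 Hz to 20 kHz human range; the roughly 2 Hz value for a waving hand is a hypothetical example value.

```python
SPEED_OF_SOUND_AIR = 340.0  # m/s, the approximate value for air quoted in the text

def classify(freq_hz: float) -> str:
    """Rough band for a frequency, using the nominal 20 Hz to 20 kHz human range."""
    if freq_hz < 20.0:
        return "infrasound"
    if freq_hz <= 20_000.0:
        return "audible"
    return "ultrasound"

def period_s(freq_hz: float) -> float:
    """Duration of one complete vibration, in seconds (T = 1 / f)."""
    return 1.0 / freq_hz

def wavelength_m(freq_hz: float, speed: float = SPEED_OF_SOUND_AIR) -> float:
    """Distance between successive pressure peaks, in metres (lambda = v / f)."""
    return speed / freq_hz

if __name__ == "__main__":
    examples = {
        "waving hand (assumed ~2 Hz)": 2.0,
        "elephant rumble": 16.0,
        "lower limit of hearing": 20.0,
        "upper limit of hearing": 20_000.0,
        "bat echolocation": 100_000.0,
    }
    for label, f in examples.items():
        print(f"{label:28s} {f:>9.0f} Hz  {classify(f):10s} "
              f"period {period_s(f):.6f} s  wavelength {wavelength_m(f):.4f} m")
```

At the lower limit of hearing (20 Hz) a sound wave in air is about 17 m long; at the upper limit (20,000 Hz) it is only about 1.7 cm long.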

Fig. 3. Categorisation of acoustic waves based on frequency range (Source: Adapted from Sahin et al., 2023)

Pitch and frequency are related but are not the same thing. Frequency is an objective, physical measure of a sound wave's vibrations: how many times an object vibrates per second. Sound waves themselves do not have pitch; pitch results from the human brain's interpretation of those vibrations. The frequency at which an object vibrates, and therefore the pitch we perceive, depends largely on its mass: the greater the mass, the more slowly it vibrates and the lower the pitch. Tension and stiffness also play a role (Sahin et al., 2023). For example, when the thick low E string of a bass guitar is plucked, it vibrates at a relatively low frequency (approximately 41 Hz), producing a deep, low-pitched sound. In contrast, the thinner G string vibrates at a considerably higher frequency (approximately 98 Hz in standard tuning), resulting in a higher pitch. Although the underlying mechanism is the same, differences in string mass (thickness) and tension lead to different vibrational frequencies and, consequently, different perceived pitches.
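The link between mass, tension and frequency in the bass guitar example can be sketched with the textbook formula for an ideal vibrating string (Mersenne's laws), f = (1/2L)·√(T/μ), where L is the vibrating length, T the tension and μ the mass per unit length. This formula is not given in the source; it is added here as an illustration, and the length, tension and mass values below are hypothetical.

```python
import math

def string_frequency(length_m: float, tension_n: float, mu_kg_per_m: float) -> float:
    """Fundamental frequency (Hz) of an ideal string fixed at both ends:
    f = (1 / 2L) * sqrt(T / mu), where mu is the mass per unit length."""
    return math.sqrt(tension_n / mu_kg_per_m) / (2.0 * length_m)

def mass_ratio_for(f_low: float, f_high: float) -> float:
    """At equal length and tension, f is proportional to 1 / sqrt(mu), so the
    lower string must be (f_high / f_low) ** 2 times heavier per metre."""
    return (f_high / f_low) ** 2

if __name__ == "__main__":
    # Hypothetical string values, chosen only to show the trends.
    length, tension, mu = 0.86, 180.0, 0.02
    base = string_frequency(length, tension, mu)
    heavier = string_frequency(length, tension, 4 * mu)   # 4x the mass: half the frequency
    tighter = string_frequency(length, 2 * tension, mu)   # 2x the tension: about 1.41x the frequency
    print(f"base {base:.1f} Hz, heavier {heavier:.1f} Hz, tighter {tighter:.1f} Hz")
    # Low E (~41.2 Hz) vs G (~98 Hz), if both had the same length and tension
    print(f"E would need ~{mass_ratio_for(41.2, 98.0):.1f}x the mass per metre of G")
```

Under the simplifying assumption of equal length and tension, the low E string would need roughly 5.7 times the mass per metre of the G string; real strings also differ in tension, so this ratio is only indicative.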

At the extremes of sound, the relationship between vibration and perception becomes even more striking. Astrophysicists have “sonified” pressure waves from the supermassive black hole in the Perseus galaxy cluster, revealing a deep B-flat that lies about 57 octaves below middle C, far below any frequency a human could ever hear (NASA Chandra, n.d.). Closer to home, the lowest vocal note recorded from a human, around 0.189 Hz, is more than 100 times lower than the 20 Hz limit of human hearing, so it cannot be heard as sound at all (Guinness World Records, 2012).
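Because each octave down halves the frequency, "57 octaves below middle C" means dividing by 2^57 (about 1.4 × 10^17). The sketch below takes middle C (about 261.63 Hz in standard A = 440 Hz tuning) as the reference; since the Perseus note is actually a B-flat, the result is an order-of-magnitude estimate only. It also counts how many octaves the 0.189 Hz vocal note lies below the 20 Hz threshold.

```python
import math

MIDDLE_C_HZ = 261.63                      # C4 in standard (A4 = 440 Hz) tuning
SECONDS_PER_YEAR = 365.25 * 24 * 3600

def octaves_down(freq_hz: float, n: int) -> float:
    """Each octave down halves the frequency: f / 2**n."""
    return freq_hz / 2 ** n

def octaves_between(f_low: float, f_high: float) -> float:
    """Number of octaves separating two frequencies: log2(f_high / f_low)."""
    return math.log2(f_high / f_low)

if __name__ == "__main__":
    perseus_hz = octaves_down(MIDDLE_C_HZ, 57)
    period_years = 1.0 / perseus_hz / SECONDS_PER_YEAR
    print(f"~{perseus_hz:.1e} Hz, one cycle every ~{period_years / 1e6:.0f} million years")
    print(f"0.189 Hz is {octaves_between(0.189, 20.0):.1f} octaves below 20 Hz")
```

On this estimate, a single cycle of the Perseus "note" takes on the order of ten million years, while the record vocal note sits a little under seven octaves below the lower limit of hearing.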

Loudness (perceived volume) depends mainly on the amplitude of the vibration that produces the sound, that is, on how large the pressure changes it creates in the air are. When you press a piano key softly, the hammer strikes the string gently, making it vibrate only slightly and producing a quiet sound. If you press the key harder, the hammer strikes the string with more force, causing it to vibrate more strongly and creating larger pressure changes in the air, which we hear as a louder sound. The scale of sound intensity in nature can be extreme. One of the loudest natural events ever recorded was the 1883 eruption of Krakatoa, whose explosion released energy equivalent to a 200-megaton explosion, around four times the yield of the Tsar Bomba, the most powerful thermonuclear device ever detonated by humans. The sound was so intense that its pressure wave travelled around the Earth multiple times before dissipating (American Academy of Audiology, 2024).
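Because the pressure changes involved in everyday sounds span many orders of magnitude, they are usually expressed on a logarithmic decibel scale. The sketch below uses the conventional definition of sound pressure level, L = 20·log10(p / p_ref) with p_ref = 20 µPa in air; this formula is not part of the source text, and the "soft" and "hard" piano pressures are hypothetical values chosen to illustrate the piano example.

```python
import math

P_REF = 20e-6  # Pa; conventional 0 dB reference for sound pressure level in air

def spl_db(pressure_pa: float) -> float:
    """Sound pressure level in decibels: L = 20 * log10(p / p_ref)."""
    return 20.0 * math.log10(pressure_pa / P_REF)

def level_change_db(p_before: float, p_after: float) -> float:
    """Change in level (dB) when the pressure amplitude goes from p_before to p_after."""
    return 20.0 * math.log10(p_after / p_before)

if __name__ == "__main__":
    soft, hard = 0.02, 0.2   # hypothetical pressure amplitudes (Pa) for a soft and a hard key press
    print(f"soft key: {spl_db(soft):.0f} dB, hard key: {spl_db(hard):.0f} dB")
    print(f"doubling the pressure adds {level_change_db(1.0, 2.0):.1f} dB")
```

With these assumed values, a tenfold increase in pressure amplitude raises the level from 60 dB to 80 dB. Perceived loudness does not grow linearly with decibels: as a rough rule of thumb, a 10 dB increase is heard as about twice as loud.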

The Auditory Pathway

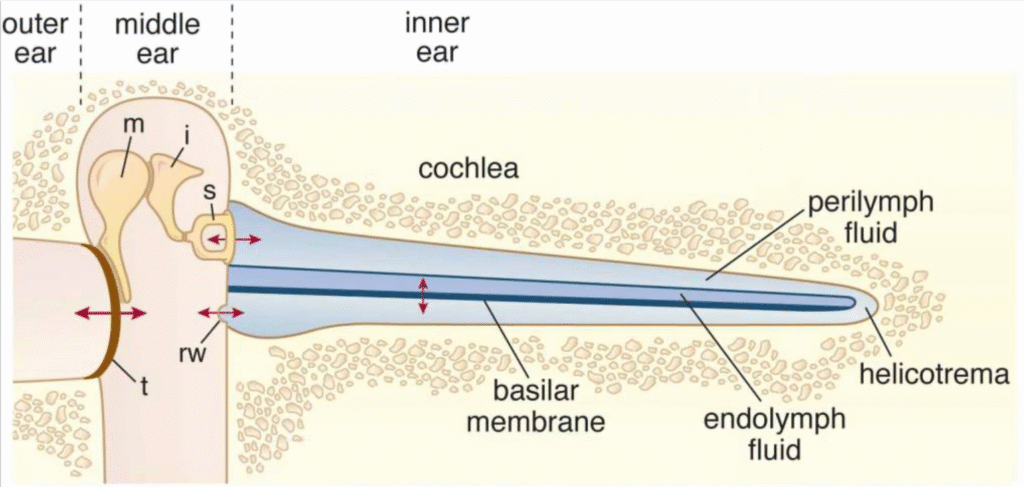

When sound waves enter your outer ear, they travel through the ear canal and cause the tympanic membrane (eardrum) to vibrate. This vibration is mechanically amplified by the ossicles, three small bones located in the middle ear, and transmitted to the cochlea, a fluid-filled spiral structure in the inner ear. Inside the cochlea, hair cells sitting on the basilar membrane are arranged in a tonotopic map, so that different positions along the membrane respond preferentially to different frequencies, with high frequencies near the base and low frequencies near the apex (Fettiplace, 2023). These highly specialised sensory cells are protected within the petrous part of the temporal bone, one of the densest bones in the human body. Despite this protection, once damaged, these hair cells have almost no capacity to regenerate in mammals, which is why hearing loss is often irreversible (Pinhasi et al., 2015; Karmitsa and Laos, 2017).
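The tonotopic map can be approximated with the Greenwood frequency-position function, f(x) = A·(10^(a·x) − k), where x is the relative distance along the basilar membrane from the apex (0) to the base (1). This model is not cited in the source; it is swapped in here as a widely used illustration, using the constants commonly quoted for the human cochlea (A = 165.4 Hz, a = 2.1, k = 0.88).

```python
import math

# Constants commonly quoted for the human cochlea (Greenwood function).
A_HZ = 165.4
A_SLOPE = 2.1
K = 0.88

def greenwood_frequency(x: float) -> float:
    """Characteristic frequency (Hz) at relative position x along the basilar
    membrane, where x = 0 is the apex and x = 1 is the base."""
    if not 0.0 <= x <= 1.0:
        raise ValueError("x must lie between 0 (apex) and 1 (base)")
    return A_HZ * (10 ** (A_SLOPE * x) - K)

def greenwood_position(freq_hz: float) -> float:
    """Inverse mapping: relative position (0 = apex, 1 = base) tuned to freq_hz."""
    return math.log10(freq_hz / A_HZ + K) / A_SLOPE

if __name__ == "__main__":
    for x in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(f"x = {x:.2f}: ~{greenwood_frequency(x):8.0f} Hz")
    print(f"1 kHz is tuned at ~{greenwood_position(1000.0):.0%} of the way from the apex")
```

With these constants, the apex is tuned to about 20 Hz and the base to about 20.7 kHz, which lines up closely with the 20 to 20,000 Hz hearing range described earlier.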

Fig. 5. Schematic of the sound transmission pathway from the eardrum to the cochlea (Source: Adapted from Fettiplace, 2017)

These hair cells convert mechanical vibrations into electrical signals (mechanoelectrical transduction). The signals are passed to auditory nerve fibres and relayed through the brainstem and thalamus to the primary auditory cortex, where basic properties such as pitch, intensity, and temporal patterns are decoded. From there, the signals travel throughout the brain, where they are further processed and integrated with perception, memory, emotion, and movement (Levitin, 2006; Fettiplace, 2017).

Notably, listening to music perceived as pleasurable can trigger the release of dopamine in reward-related areas such as the ventral striatum, including the nucleus accumbens: the same neural systems involved in other rewarding experiences, such as food and social bonding (Gold et al., 2013; Ferreri et al., 2019).

When Sound Becomes Music

Not all sound becomes music. Music emerges when sound is organised with intention, shaped in time, structured through rhythm, pitch and harmony, and perceived as meaningful by a listener. Unlike random environmental noise, musical sound follows patterns that invite recognition and prediction; our brains naturally synchronise with these patterns, aligning internal rhythms to the beat and structure of the music. This predictive engagement is closely linked to the brain's reward system. Research suggests that the same neural circuits that respond to primary rewards are activated by musical expectation and resolution, which may explain why certain musical moments evoke chills or create a strong sense of anticipation (Gold et al., 2013; Ferreri et al., 2019).

Through culture and experience, we learn to interpret these patterns emotionally and aesthetically, transforming physical vibrations into something expressive and shared. In this sense, music may be understood not as something that exists independently in the air, but as something that emerges in the brain, where sound is organised, interpreted, and given meaning (Wilkins et al., 2014; Reybrouck et al., 2018).

REFERENCES

American Academy of Audiology (2024) ‘The loudest known sound ever!’, American Academy of Audiology, 23 January. Available at: https://www.audiology.org/the-loudest-known-sound-ever/

Ferreri, L. et al. (2019) ‘Dopamine modulates the reward experiences elicited by music’, Proceedings of the National Academy of Sciences (PNAS), 116(9), pp. 3793–3798. doi:10.1073/pnas.1811878116. Available at: https://www.pnas.org/doi/10.1073/pnas.1811878116

Fettiplace, R. (2017) ‘Hair cell transduction, tuning and synaptic transmission in the mammalian cochlea’, Comprehensive Physiology, 7(4), pp. 1197–1227. doi:10.1002/cphy.c160049. Available at: https://pmc.ncbi.nlm.nih.gov/articles/PMC5658794/

Fettiplace, R. (2023) ‘Cochlear tonotopy from proteins to perception’, BioEssays, 45(8), 2300058. doi:10.1002/bies.202300058. Available at: https://pubmed.ncbi.nlm.nih.gov/37329318/

Gold, B.P. et al. (2013) ‘Pleasurable music affects reinforcement learning according to the listener’, Frontiers in Psychology, 4, 541. doi:10.3389/fpsyg.2013.00541. Available at: https://www.frontiersin.org/journals/psychology/articles/10.3389/fpsyg.2013.00541/full

Guinness World Records (2012) ‘Lowest vocal note by a male’. Available at: https://www.guinnessworldrecords.com/world-records/lowest-vocal-note-by-a-male

IFAW (2024) ‘Animals with the best hearing in the world’, International Fund for Animal Welfare, 19 April. Available at: https://www.ifaw.org/international/journal/animals-best-hearing-world

Impey, C. (2023) ‘Why isn’t there any sound in space? An astronomer explains why in space no one can hear you scream’, The Conversation, 4 December. Available at: https://theconversation.com/why-isnt-there-any-sound-in-space-an-astronomer-explains-why-in-space-no-one-can-hear-you-scream-217885

Karmitsa, E. and Laos, M. (2017) ‘Inner ear hair cells are hard to regenerate’, University of Helsinki News, 8 September. Available at: https://www.helsinki.fi/en/news/healthier-world/inner-ear-hair-cells-are-hard-regenerate

Levitin, D.J. (2006) This is your brain on music: The science of a human obsession. New York: Dutton/Penguin Group.

NASA Chandra (n.d.) ‘Perseus cluster sonification’, Chandra X-ray Observatory. Available at: https://chandra.si.edu/sound/perseus.html

Pinhasi, R. et al. (2015) ‘Optimal ancient DNA yields from the inner ear part of the human petrous bone’, PLoS One, 10(6), e0129102. doi:10.1371/journal.pone.0129102. Available at: https://pmc.ncbi.nlm.nih.gov/articles/PMC4472748/

Reybrouck, M., Vuust, P. and Brattico, E. (2018) ‘Brain connectivity networks and the aesthetic experience of music’, Brain Sciences, 8(6), 107. doi:10.3390/brainsci8060107. Available at: https://pmc.ncbi.nlm.nih.gov/articles/PMC6025331/

Sahin, M., Ali, M., Park, J. and Destgeer, G. (2023) ‘Fundamentals of acoustic wave generation and propagation’. doi:10.1002/9783527841325.ch1. Available at: https://www.researchgate.net/publication/374539079_Fundamentals_of_Acoustic_Wave_Generation_and_Propagation

Wilkins, R.W., Hodges, D.A., Laurienti, P.J., Steen, M. and Burdette, J.H. (2014) ‘Network science and the effects of music preference on functional brain connectivity: From Beethoven to Eminem’, Scientific Reports, 4, 6130. doi:10.1038/srep06130. Available at: https://pmc.ncbi.nlm.nih.gov/articles/PMC5385828/